Considered Harmful: Test Driven Development

the development process that can be named is not the true development process

None of what I post is "ai" generated. "AI" does not exist and what is being called "ai" vomits up misinformation as facts mixed in with a sprinkling of actual facts to make it extremely harmful to use.

What you read here, I wrote.

TL;DR

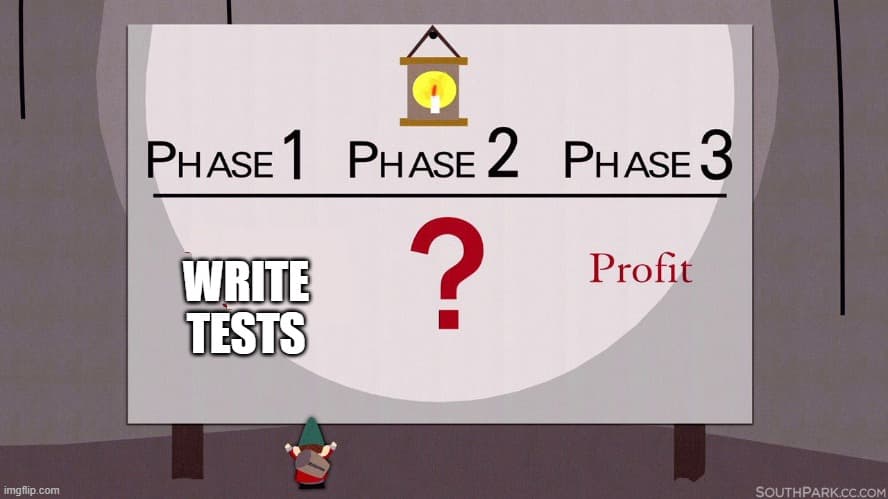

Dogmatic adherence to anything is bad, especially to ideas like this one. Testing is good, more testing is more better, testing everything is all the best. Not! TDD is the ultimate "created by consultants" product, built to sell certificates and courses and to bill hours.

What it delivers in practice is diametrically opposed to its stated goal.

Software engineer Kent Beck, who is credited with having developed or "rediscovered"[1] the technique, stated in 2003 that TDD encourages simple designs and inspires confidence.

Reality

TDD encourages writing complex speculative code that gets thrown away, is never used or, worse, hangs around in your code base as "zombie" code whose only function is to make you feel good at the end of the day about not getting anything useful actually done. Because LOC ... 100% CC ... writing code is fun, solving actual problems is work.

For any non-trivial project, any programmer with intermediate experience can tell you that writing tests before you write the code, as a way to design the code, is a non-starter.

Now, who in their right mind would promote something so clearly not viable? Maybe someone who really likes writing testing frameworks more than actual applications?

Tests are code, *so when do you write the tests that test the tests?*

On a team that is being held to a budget and a deadline, writing tests as a design tool is ridiculous in practice. Especially in the real world, where the requirements are malleable and you are working on an "agile" team.

TDD target audience: beginners looking for success without having to think.

But this TDD idea is not aimed at intermediate or expert programmers, it is aimed at beginners. The very audience that does not know any better and thinks this sounds like a good way for them to focus and structure their design process, when it is just a Turing tarpit of wasted time.

"Speed is important in business, time is money ..."

They do the course or video series on TDD that they discover and it "makes so much sense", in the strawman case of a boot camp "TODO app".

Then, when they cannot get anything done, are behind schedule and over budget, and all they have to show at the end of each sprint is a bunch of tests that "fail successfully", they are told they are just not "doing it right". They need more paid courses, and they need to convince their team and/or management to drink the TDD Kool-Aid.

They just need to evangelize to the business that having as close to 100% test coverage of an 80% working application is better than having a 100% working app with 20% test coverage.

As a contractor, I worked on a couple of projects where you could not merge any code at the end of a sprint that did not have 100% coverage. Yes, 100%. That meant you had to write or generate tests for every toString() method on every class. Yes, this was Java, and it was 2005/6, the height of this madness that persists to this day.

It was the most moronic waste of time, but hey, I was just a warm butt in a seat behind a keyboard pressing keys. As such, all the badged employees delegated all this useless test code to me, the most expensive person on the team, because the other work was more fun and more important for clout.

I ended up having to redo most of their stuff anyway, because I was actually hired to write all the complex concurrent and cache code that they could not get to work without deadlocking or working on stale data.

Here is what and how you need to test

As you are writing the logic for your application, you are by definition writing your testing in your code, to make sure it does what you need it to do. You write a function, write code that calls it, and iterate until it does what you intend it to do. Using a step debugger, hopefully!

Once it is working, it is tested. It does not need to be tested on every build after that until it gets changed!

Then you move onto the next thing until you get your application working and ready for production.

Here is when you need to write formal tests

1. Bugs

When your application is working and a bug is found, you write a test that exposes the bug, then you work on the fix until the test passes.
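
A minimal sketch of that bug-first workflow, assuming Python's standard `unittest` module. The `average` function and the empty-list bug are hypothetical, invented only for illustration. The test was written to fail against the buggy version and passes once the guard is added.

```python
import unittest


def average(values):
    """Hypothetical function; the earlier version raised ZeroDivisionError on []."""
    if not values:  # the fix: this guard did not exist when the bug was reported
        return 0.0
    return sum(values) / len(values)


class AverageBugTest(unittest.TestCase):
    def test_empty_list_returns_zero(self):
        # Written first to expose the reported bug, kept as proof of the fix.
        self.assertEqual(average([]), 0.0)

    def test_non_empty_list_still_works(self):
        # Confirms the fix did not disturb the normal case.
        self.assertEqual(average([2, 4]), 3.0)
```

Saved as a module, this could be run with `python -m unittest`.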

2. Logic Changes - Addition/Deletion

When your application logic needs to change or be added to, you write a test to show that the current behavior works. This way, when you change something, you can be sure that you did not break the behavior you need to keep when you added the new behavior you needed. Assuming you test the new code as you write it, there is no need to write any formal tests for the new code, as long as it does what you intended and the existing logic that you needed to keep still works.
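
A sketch of pinning existing behavior before adding to it, again assuming Python's `unittest`. The `shipping_cost` function, its rates, and the `express` option are all hypothetical. The test covers only the behavior that has to survive the change, not the new code.

```python
import unittest


def shipping_cost(weight_kg, express=False):
    """Hypothetical function; `express` is the newly added behavior."""
    base = 5.0 + 2.0 * weight_kg  # the existing rule that must keep working
    return base * 1.5 if express else base


class ExistingShippingBehaviorTest(unittest.TestCase):
    def test_standard_rate_is_unchanged(self):
        # Written against the old code, before `express` existed.
        self.assertEqual(shipping_cost(0), 5.0)
        self.assertEqual(shipping_cost(10), 25.0)
```

If this test still passes after the change, the behavior you needed to keep is intact.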

3. Logic Changes - Modifying Behavior

This one is similar to the last one. If you are changing a behavior completely, then there is no real reason to write a formal test. This is logically the equivalent of deleting the existing logic and writing new logic, which you will test as you write it by default.

If you are keeping some of the existing behavior and changing some, then write a test to make sure the behavior you want to keep is not affected. Then you can modify with this safety net.

Once your tests pass, you are ready for production.

What I do then is disable all the tests. Running tests on every build against code that has not been modified is just willful ignorance at best.
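
The text does not say how the tests get disabled, so the following is only one possible sketch, assuming Python's `unittest`. The environment variable `RUN_REGRESSION_TESTS` and the test class are hypothetical names. The tests stay in version control but are skipped unless someone switches them back on because the code under test changed.

```python
import os
import unittest

# Hypothetical switch: set RUN_REGRESSION_TESTS=1 only when the tested code changes.
RUN_TESTS = os.environ.get("RUN_REGRESSION_TESTS") == "1"


@unittest.skipUnless(RUN_TESTS, "code under test is unchanged")
class CacheRegressionTest(unittest.TestCase):
    def test_lookup_returns_stored_value(self):
        # Stand-in for a real regression test; a plain dict plays the cache.
        cache = {"key": "value"}
        self.assertEqual(cache["key"], "value")
```

With the variable unset, the test runner reports the whole class as skipped instead of running it.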

Tests Are Code

Code needs to be maintained. Code costs money and time. Especially "non-functional" code, that is, code that does not make it to production. Test code is code and it has to be maintained.

Clichés exist for a reason

The best code is code that does not need to be written, the next best code is code that you delete. Tests that you do not write are the best code, tests you delete are even better. Version control exists for a reason.

This presses your buttons?

Follow so you can tell me how wrong I am when I post the Considered Harmful series entries about BDD, DDD and all the other Consultant Driven Design religions.